Hello again and welcome to our (very delayed) monthly round-up for April. I intended to publish this closer to the start of May, but life got in the way, I'm afraid. It takes a while to put these posts together, but I think it's worth doing, so I wanted to get this out before we start on the one for May.

We reached a few hefty milestones in April that are the culmination of several months of determination, so it's nice to see them bear fruit. I hope you'll be as fired up about the news as we are.

As always, the online demo has the latest code so you can play with the new features there.

Docker images for ARM/Raspberry Pi

It’s been several months in the making and a lot more work than originally expected but we now have releases for ARM-based machines like the Raspberry Pi. This means that for around $35 you can have a low-powered server running Photonix. You’d probably want to connect an external hard drive and we’d recommend going for the larger RAM versions if you can spare the money.

If you have another ARM-based machine such as a Banana Pi, Orange Pi or Odroid, we think it should work, but we'd love for someone to test one and let us know how they get on via the issue tracker.

We currently build 2 ARM Docker images — ARM32v7 and ARM64v8. These should be compatible and optimised for most machines currently in use. You can find these builds on our new Docker Hub repository.

These Docker images are built through a new GitHub Actions set-up based on Docker buildx. For each tagged version created in the Git repo, images are built for all three architectures (amd64, ARM32v7 and ARM64v8). This should be quite easy to extend to other architectures supported by Docker buildx. The installation instructions have been updated, and the example docker-compose.yml file works on all supported platforms.
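The workflow file itself isn't reproduced here, but the heart of a multi-architecture buildx release is a single `docker buildx build` call with a comma-separated `--platform` list. The sketch below builds that command in Python. It assumes Docker Hub's architecture names map to the standard buildx platform strings, and the image name is a hypothetical placeholder, not necessarily our actual Docker Hub repository.

```python
import subprocess
from typing import List, Sequence

# Docker Hub architecture names mapped to the platform strings buildx expects.
PLATFORMS = {
    "amd64": "linux/amd64",
    "arm32v7": "linux/arm/v7",
    "arm64v8": "linux/arm64",
}


def buildx_command(image: str, tag: str, arches: Sequence[str] = tuple(PLATFORMS)) -> List[str]:
    """Build (but do not run) a multi-platform `docker buildx build` command.

    `image` is a hypothetical placeholder name used for illustration.
    """
    platforms = ",".join(PLATFORMS[arch] for arch in arches)
    return [
        "docker", "buildx", "build",
        "--platform", platforms,
        "--tag", f"{image}:{tag}",
        "--push",  # multi-platform results are pushed as a single manifest list
        ".",
    ]


def build_release(image: str, tag: str) -> None:
    # Needs Docker with buildx and QEMU binfmt handlers registered for emulation.
    subprocess.run(buildx_command(image, tag), check=True)
```

In a CI job, `tag` would come from the Git tag that triggered the build, so every release produces one manifest covering all three architectures.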

There were a few tricky areas when building for ARM. If you’re interested in doing something similar this is what we came up against. Warning: this gets pretty technical so you may want to skip on over to the next section.

- Python packages that do a lot of computation use compiled C code for performance. Examples include NumPy, SciPy, Matplotlib and Psycopg. These generally have pre-compiled Wheel packages (.whl) for common operating systems on the x86/amd64 architecture, which install quickly, but on other architectures they have to be compiled at install time (e.g. via pip). This can take many hours during the Docker build stage, and any error or version number change can mean starting again if you're not careful. My solution was to create a private PyPI repository and a script that uploads compiled Wheel packages as soon as they're built. This effectively gives us a cache: each version of a dependency only has to be built once for each platform. The script handles building, uploading and installing Python packages, and the .pypirc and pip.conf files configure the custom PyPI repository. I would use this technique again even on the x86/amd64 platform if I wanted to base an image on one of the lightweight Alpine Docker images, as Alpine uses musl rather than glibc as its C standard library, which also forces a lot of Python packages to be compiled.

- Following on from the previous point, TensorFlow takes compilation to the next level of pain. There are a few provided builds for Raspberry Pi, but not for the latest version and not for the version of Python we're using. You need the right version of Bazel (a build tool) installed, and a build can take over a day on an average laptop (each time you try). The CI build scripts for Raspberry Pi/ARM also needed a bit of tweaking. To standardize the builds and make them repeatable, I created a new tensorflow-builder repo with Docker-based scripts. You can read more about the process in our Building Python Dependencies documentation. To build faster and iterate on fixing errors, I found it best to spin up a 32-CPU VPS on DigitalOcean (referral link benefits us both). This can build TensorFlow for a single architecture in about an hour. Just remember to destroy the machine afterwards (DigitalOcean still bills for powered-off Droplets), as the one I chose would cost $640 for a whole month!

- Next I encountered some weirdness in the way pip behaves. Pip is the most common Python package manager, and we use it during the Docker build stages. When a package needs compiling, pip creates an isolated build environment and installs the build dependencies that the package asks for. Their versions are likely to differ from the ones we want: for example, we need the specific version of NumPy that TensorFlow requires, but compiling Matplotlib picks another version of NumPy. I tried pip arguments such as --no-build-isolation, but in the end my workaround was to carefully order requirements.txt and call pip install for each line in it. That way, Matplotlib gets compiled against the version of NumPy that is already installed in the environment. I was quite surprised that I had to do this. If you have any better solutions, I'd like to hear about them.

- Finally, there were some inconsistencies between architectures when using Docker buildx. I'm not sure whether this was caused by QEMU emulation, differences in the Python Docker images we use as a base, or something else. The most obvious place this happened was where the NodeJS and Yarn packages get installed: it caused errors installing the Debian package signing keys, so I had to find an alternative method that worked on all the architectures we build for with Docker buildx.
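Here is a minimal sketch of the "one pip install per line" workaround from the list above, assuming each line of requirements.txt is a plain requirement specifier (no `-r`/`-c` option lines). The optional `extra_index_url` stands in for a private PyPI repository serving pre-built wheels; its value is hypothetical, and this is an illustration rather than our actual build script.

```python
import re
import subprocess
import sys
from typing import Callable, Iterable, List, Optional

# pip treats "#" as a comment only at line start or after whitespace,
# so URL fragments such as "...#egg=name" are left alone.
_COMMENT = re.compile(r"(^|\s)#.*$")


def iter_requirements(text: str) -> List[str]:
    """Return requirement lines in file order, skipping blanks and comments."""
    reqs = []
    for line in text.splitlines():
        line = _COMMENT.sub("", line).strip()
        if line:
            reqs.append(line)
    return reqs


def install_in_order(
    requirements: Iterable[str],
    extra_index_url: Optional[str] = None,
    run: Callable[..., object] = subprocess.run,
) -> List[List[str]]:
    """Call `pip install` once per requirement, strictly in file order."""
    commands = []
    for req in requirements:
        cmd = [sys.executable, "-m", "pip", "install", req]
        if extra_index_url:
            # Hypothetical private index serving previously built wheels.
            cmd += ["--extra-index-url", extra_index_url]
        run(cmd, check=True)  # stop at the first failure
        commands.append(cmd)
    return commands
```

In a Dockerfile `RUN` step this would be called as `install_in_order(iter_requirements(open("requirements.txt").read()))`. The ordering of requirements.txt then becomes significant, so pinned dependencies like NumPy belong near the top.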

Mobile apps approved

GitHub issue #151

Some exciting news following on from last month’s update — the Android and iOS apps both got accepted into the Google Play and Apple App Stores, respectively.

To reiterate, these are early versions of our mobile apps and we want to develop them a lot further. Having the first versions accepted means that the pipelines are established and further updates should go out without a hitch.

You can download and review ;) our apps on Google Play and the Apple App Store.

The next piece of work we’ll be undertaking on the mobile apps is automatic image uploading which should bring us in line with the likes of Google Photos and iCloud.

The code for the mobile app is also open source and you can get stuck into the code repo for it if you are that way inclined. It’s based around React Native and Expo tooling.

Allow users to select the preferred Photo version

GitHub issue #193

Photonix understands that for a single photo, you might have several files. You could have the raw file (if your camera produces them), an original JPEG produced by the camera and possibly several edits you made using Photoshop, Lightroom, DarkTable, Photivo, RawTherapee, etc.

By looking at the “taken at” date in metadata we are able to group these files together so you don’t see duplicates. We make an educated guess about which file you’ll want to see as the main representation of these files (thumbnails and detail). Now you also have the option to change which file is your preferred version. When you click a thumbnail and get to the detailed view you can open the metadata info panel using the “i” icon in the top-right corner. If there are multiple files available for the photo you’ll see a drop-down select menu with the other files listed.

We believe grouping files like this makes us different to most other photo organizers and caters more towards keen amateur and professional photographers. Let us know what you think about this. I’m sure in time we can add more options around this behaviour.
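The post doesn't spell out the exact grouping or default-selection rules, so here is a simplified sketch. It groups files that share a "taken at" timestamp and then picks the file to display. The heuristic is hypothetical: an explicit user choice wins, otherwise the most recently modified non-raw file is preferred, falling back to the raw file. `PhotoFile` and the extension list are illustrative names, not Photonix's models.

```python
from collections import defaultdict
from dataclasses import dataclass
from datetime import datetime
from pathlib import Path
from typing import Dict, List, Optional

# Hypothetical, non-exhaustive list of camera raw extensions.
RAW_EXTENSIONS = {".cr2", ".nef", ".arw", ".dng", ".raf", ".orf"}


@dataclass
class PhotoFile:
    path: str
    taken_at: datetime
    modified_at: datetime

    @property
    def is_raw(self) -> bool:
        return Path(self.path).suffix.lower() in RAW_EXTENSIONS


def group_by_taken_at(files: List[PhotoFile]) -> Dict[datetime, List[PhotoFile]]:
    """Files sharing the same 'taken at' timestamp are treated as one photo."""
    groups: Dict[datetime, List[PhotoFile]] = defaultdict(list)
    for f in files:
        groups[f.taken_at].append(f)
    return dict(groups)


def preferred_file(group: List[PhotoFile], user_choice: Optional[str] = None) -> PhotoFile:
    """Pick the file shown as the thumbnail/detail view (hypothetical heuristic)."""
    if user_choice is not None:
        for f in group:
            if f.path == user_choice:
                return f  # a choice made in the info panel always wins
    rendered = [f for f in group if not f.is_raw]
    candidates = rendered or group
    # Assume the most recently modified rendered file is the latest edit.
    return max(candidates, key=lambda f: f.modified_at)
```

Keeping the user's choice separate from the heuristic means a later re-scan can recompute the default without overwriting an explicit selection.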

Paginated thumbnail loading

GitHub issue #215

This is a much-needed performance optimisation; the need became apparent as soon as you imported more than a few hundred photos. Now, instead of trying to fetch all your photo thumbnails at once, we fetch them in chunks of 100. As you scroll down, another "page" of results is downloaded and added to the bottom. There's much more work we want to do on thumbnail loading, such as making them "scrubbable", but this gets us to a workable situation in the meantime.
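To illustrate the chunked loading described above, here is a small generator that requests results 100 at a time until a short page signals the end. It assumes simple offset/limit paging. The real GraphQL API may use cursors instead, and `fetch_page` is a hypothetical stand-in for the query the frontend sends.

```python
from typing import Callable, Iterator, List, TypeVar

T = TypeVar("T")

PAGE_SIZE = 100  # chunk size mentioned in the post


def iter_pages(fetch_page: Callable[[int, int], List[T]], page_size: int = PAGE_SIZE) -> Iterator[List[T]]:
    """Yield successive pages until the server returns a short (or empty) page."""
    offset = 0
    while True:
        page = fetch_page(offset, page_size)
        if page:
            yield page
        if len(page) < page_size:
            return  # nothing more to load
        offset += len(page)
```

In the UI, each `next()` on this generator corresponds to the user scrolling near the bottom of the list, so only the pages actually viewed are ever requested.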

Handle moving/renaming files

GitHub issue #188

This should be a fairly simple feature to explain: if you move files into different folders or rename them, Photonix will now recognise that they are the same files. Until now, moving a file looked to the system as if one file had been deleted and a new one had appeared. Photonix's database record of a Photo is now updated when a move happens, which means any tags or other metadata remain intact.

Some other photo managers require you to hand the application control over the folder structure of your photo storage. We wanted to be less intrusive and let you keep organising files the way you're used to, but this takes a bit more effort on our part.
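The post doesn't say how moves are detected. One common approach is to fingerprint file contents, and the sketch below assumes a SHA-256 hash per file. It compares the `{path: hash}` records already in the database with what is found on disk. A path that disappeared and whose hash reappears under a new path is treated as a move, so the existing record (and its tags) can be updated instead of deleted. All function names are hypothetical.

```python
import hashlib
from pathlib import Path
from typing import Dict, List, Tuple


def file_hash(path: Path, chunk_size: int = 1 << 20) -> str:
    """SHA-256 of a file's contents, read in chunks to keep memory use low."""
    digest = hashlib.sha256()
    with path.open("rb") as f:
        for chunk in iter(lambda: f.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()


def reconcile(
    known: Dict[str, str], on_disk: Dict[str, str]
) -> Tuple[List[Tuple[str, str]], List[str], List[str]]:
    """Compare database {path: hash} with on-disk {path: hash}.

    Returns (moves, added, deleted), where each move is (old_path, new_path).
    """
    missing = {p: h for p, h in known.items() if p not in on_disk}
    new = {p: h for p, h in on_disk.items() if p not in known}
    # Index vanished files by hash so a new path with identical content can claim one.
    missing_by_hash: Dict[str, List[str]] = {}
    for p, h in missing.items():
        missing_by_hash.setdefault(h, []).append(p)

    moves: List[Tuple[str, str]] = []
    added: List[str] = []
    for p, h in new.items():
        candidates = missing_by_hash.get(h)
        if candidates:
            moves.append((candidates.pop(), p))  # update the record, tags survive
        else:
            added.append(p)
    deleted = [p for paths in missing_by_hash.values() for p in paths]
    return moves, added, deleted
```

Hashing whole files is expensive for large libraries, so a real scanner might only hash files whose path has changed, or compare cheaper signals such as size and modification time first.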

Swipe left and right between photos

GitHub issue #220

This continues on from last month's feature "Go directly to next or previous photo" #171. On desktop, skipping from one photo to the next was discoverable because arrow icons appear when the cursor hovers over that area of the screen. We didn't want the arrow icons to be permanently visible on phones and other touch screens (which don't support hover), as space there is already limited. Instead, we implemented a more intuitive method by handling swipe gestures. This had to work alongside the existing feature "Gesture-enabled zooming and panning of images" #153, which was also introduced last month.
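The real gesture handling lives in the JavaScript frontend. For consistency with the other examples, here is the core decision sketched in Python. A completed touch counts as a swipe only if it travels far enough horizontally, stays mostly horizontal, and happens while the image isn't zoomed in (otherwise it's a pan). The thresholds, the zoom check and the left-means-next convention are assumptions for illustration.

```python
from typing import Optional

MIN_SWIPE_PX = 50         # hypothetical minimum horizontal travel
MAX_VERTICAL_RATIO = 0.5  # allowed vertical drift relative to horizontal travel


def classify_swipe(start_x: float, start_y: float, end_x: float, end_y: float,
                   zoom: float = 1.0) -> Optional[str]:
    """Return 'next', 'previous' or None for a completed touch gesture."""
    if zoom > 1.0:
        return None  # a zoomed image is being panned, not swiped
    dx = end_x - start_x
    dy = end_y - start_y
    if abs(dx) < MIN_SWIPE_PX or abs(dy) > abs(dx) * MAX_VERTICAL_RATIO:
        return None  # too short, or too diagonal to be a deliberate swipe
    # Finger moving left (towards smaller x) brings in the next photo.
    return "next" if dx < 0 else "previous"
```

Gating on the zoom level is one simple way to keep swiping and the existing pan gesture from fighting over the same touch.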

Star ratings and subject tags imported from metadata

GitHub issue #235

If you’ve been using other applications to tag or star rate your photos, Photonix is now able to import them. This means you’ll see star ratings in the timeline view and be able to search and filter by the stars and tags too. You’ll need to make sure that whatever tool you previously used is configured to write this metadata back to the original files rather than just storing it in the application database. This can usually be found in the settings.

This should handle files tagged with the following management applications: DigiKam, Shotwell, DarkTable, Gnome Photos, Photivo, F-Spot, Adobe Lightroom, Picasa and more.

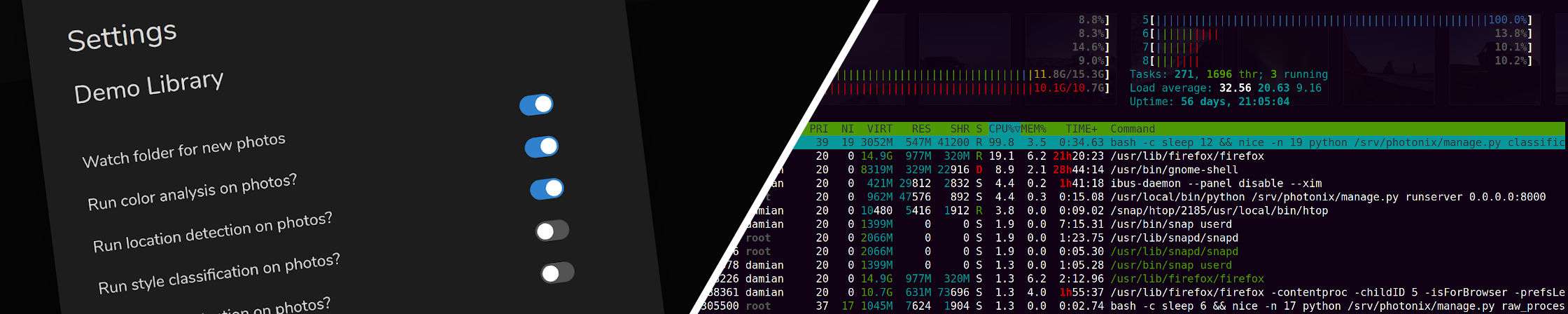
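Tools like these commonly write ratings and keywords into XMP metadata, typically as `xmp:Rating` and `dc:subject`. As an illustration, here is a standard-library sketch that pulls the XMP packet out of a file's bytes and reads those two properties. It assumes the conventional `x:xmpmeta` prefix and ignores tool-specific fields (such as hierarchical tags) and IPTC/EXIF keywords. It is not Photonix's actual importer.

```python
import xml.etree.ElementTree as ET
from typing import List, Optional, Tuple

NS = {
    "rdf": "http://www.w3.org/1999/02/22-rdf-syntax-ns#",
    "xmp": "http://ns.adobe.com/xap/1.0/",
    "dc": "http://purl.org/dc/elements/1.1/",
}
START, END = b"<x:xmpmeta", b"</x:xmpmeta>"


def extract_xmp(data: bytes) -> Optional[str]:
    """Find an embedded XMP packet in raw file bytes (assumes the usual x: prefix)."""
    start, end = data.find(START), data.find(END)
    if start == -1 or end == -1:
        return None
    return data[start:end + len(END)].decode("utf-8", errors="replace")


def read_rating_and_tags(xmp: str) -> Tuple[Optional[int], List[str]]:
    """Return (star rating or None, subject tags) from an XMP packet."""
    root = ET.fromstring(xmp)
    rating: Optional[int] = None
    tags: List[str] = []
    for desc in root.iter(f"{{{NS['rdf']}}}Description"):
        # xmp:Rating may be written as an attribute or as a child element.
        value = desc.get(f"{{{NS['xmp']}}}Rating")
        if value is None:
            child = desc.find("xmp:Rating", NS)
            value = child.text if child is not None else None
        if value is not None and rating is None:
            stars = int(float(value))
            rating = stars if stars > 0 else None  # 0 = unrated, -1 = rejected
        for li in desc.findall("dc:subject/rdf:Bag/rdf:li", NS):
            if li.text:
                tags.append(li.text.strip())
    return rating, tags
```

This is also why write-back matters: if a tool keeps ratings only in its own database, there is nothing in the file for a reader like this to find.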

Allow types of analysis to be switched on or off

GitHub issue #152

Through developing in public, we found that some users with large collections and low-powered devices like the Raspberry Pi were struggling to import their collections with all the image analysis features turned on. When we guide users through setup for the first time, we let them choose which types of analysis to run: Color analysis (fast), Location identification (fast), Style recognition (medium) and Object detection (slow). These features can also be turned on or off, with near-immediate effect, from the settings dialogue. The slowest analyser (object detection) can take over 10 seconds per image, which really adds up: for 10,000 photos, that is over 27 hours of processing.

These analysis jobs run in the background at the lowest operating system priority, but they can still cause some responsiveness issues through RAM or disk usage. The analysis settings are stored per library, so you can enable analysers for just some of your libraries.

One way you might manage a large import would be to turn off colour analysis (or even all of the analysers), add your photos, and then, once you're happy everything's imported, start turning analysis on (maybe overnight).
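As a rough sketch of how per-library settings like these could drive the background queue, the code below filters the analysers for each imported photo and lowers the worker's scheduling priority. `LibrarySettings`, `jobs_for_photo` and the analyser keys are hypothetical names. `os.nice` is the standard Unix call for raising a process's niceness.

```python
import os
from dataclasses import dataclass, field
from typing import Dict, List

# Analysers from the post, roughly from fastest to slowest (hypothetical keys).
ANALYSERS = ["color", "location", "style", "object"]


@dataclass
class LibrarySettings:
    # Hypothetical per-library toggles; every analyser is on by default.
    enabled: Dict[str, bool] = field(default_factory=lambda: {a: True for a in ANALYSERS})


def jobs_for_photo(settings: LibrarySettings) -> List[str]:
    """Analysers to queue for a newly imported photo in this library."""
    return [a for a in ANALYSERS if settings.enabled.get(a, False)]


def lower_priority() -> None:
    """Make this worker process as low priority as the OS allows (Unix only)."""
    if hasattr(os, "nice"):
        os.nice(19)  # raise niceness by 19; Linux clamps it at 19, the lowest priority
```

Checking the settings again when a worker picks up a job, not only when it is queued, would be one way to make a toggle take effect almost immediately.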

Throttling on search typing

GitHub issue #219

This is a small fix using a common technique for search. The initial implementation was performing a new search for every character the user typed in the search box. If a user typed very quickly then they could be hitting the server with many searches per second and cause excessive UI updating/flashing. Now we use the throttle-debounce library to only perform the search when the user has paused typing for a few hundred milliseconds (debounce).
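The frontend uses the JavaScript throttle-debounce library for this. To show the underlying idea, here is the same debounce technique sketched in Python with `threading.Timer`. Each call cancels the pending timer and starts a new one, so the wrapped function runs once, after input has paused for `wait` seconds. This mirrors the concept, not that library's API.

```python
import threading
from typing import Any, Callable, Optional


class Debounced:
    """Run `func` only after `wait` seconds pass without another call."""

    def __init__(self, wait: float, func: Callable[..., Any]) -> None:
        self.wait = wait
        self.func = func
        self._timer: Optional[threading.Timer] = None
        self._lock = threading.Lock()

    def __call__(self, *args: Any, **kwargs: Any) -> None:
        with self._lock:
            if self._timer is not None:
                self._timer.cancel()  # a newer keystroke supersedes the pending search
            self._timer = threading.Timer(self.wait, self.func, args, kwargs)
            self._timer.daemon = True
            self._timer.start()
```

For example, wrapping a hypothetical `run_search(query)` as `Debounced(0.3, run_search)` and calling it on every keystroke would trigger a single search about 300 ms after the user stops typing, with the final query.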

Bug fixes

- Same image returned multiple times when it has the same tag multiple times #224

- GraphQL error: Float division by zero (metadata exposure set to zero) #216

- Django security key randomisation #237

- Apollo GraphQL error on page load when JWT token expired #233

- Import fails when image has camera model but no camera make #196

Coming soon…

- Progress notifications #192

- Allow manual rotation of photos #211

- Commands for creating users and assigning them to libraries #194

- Autocomplete when search string matches available tags #226

I hope you found something of interest in this round-up. Either way, you shouldn't have to wait long for the next one.

Thanks for reading,

Damian

Header photo by Louis Mornaud

Raspberry Pi 4 photo by Vishnu Mohanan

Raspberry Pi Zero photo by Harrison Broadbent

Hard drive photo by Benjamin Lehman