Welcome back to another bumper round-up of what we achieved in Photonix during March. I’m again pretty happy with the progress Gyan and I have made on the project. It’s exciting to see that we are getting close to a release that contains all the main features so stay tuned for some big announcements in the near future.

As ever the demo version of Photonix is up-to-date with the latest code so please do go and check it out.

Let’s get stuck into the new features…

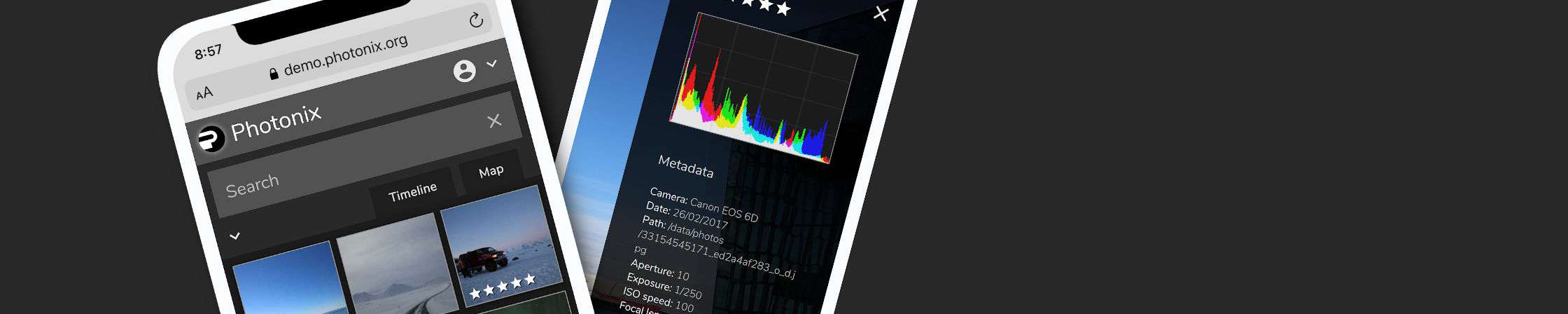

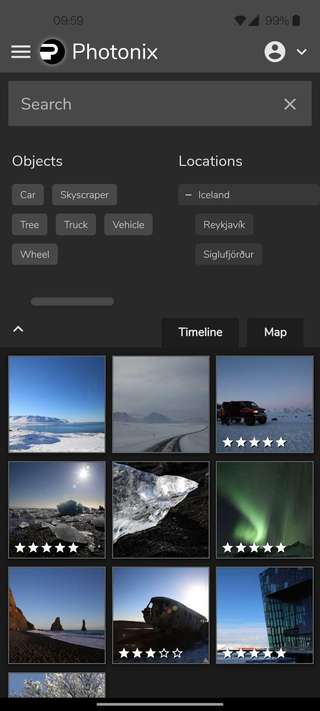

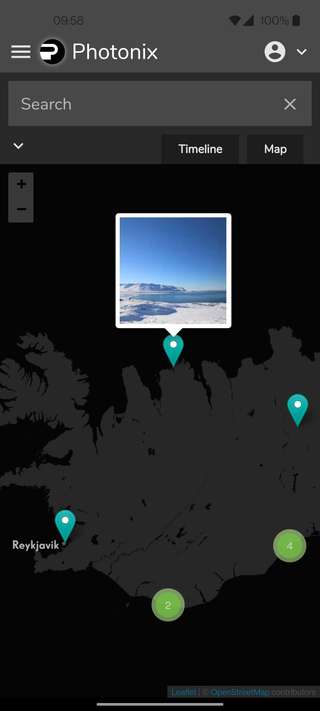

Mobile layout

I had a list of requirements and a user experience in mind for how Photonix should behave on mobile devices. We also received some helpful feedback from reviewers on Reddit last month about what they were expecting. Quite a bit of polishing has gone on since then and I hope you find the experience a lot more intuitive and closer to what you expect.

These were the main points I tackled from a mobile perspective although a lot of the other features included in this post also contribute to this.

- Grid layout of thumbnails means less wasted space on narrower screen sizes.

- App starts with the search area collapsed on smaller devices to allow more space for thumbnails. It remembers whether the area was expanded for next time.

- Safe area detection to make sure icons on photo detail screen are always visible/tappable on mobile devices that have notches/cut-outs for cameras.

- New info/metadata panel which is more discoverable and accessible on mobile devices. See the “i” icon in the top-right corner of the photo detail view.

- Layout bug fixes that were specific to Safari on iOS for scrolling the thumbnail view.

Gesture-enabled zooming and panning of images

This is related to the mobile layout refinements mentioned above but benefits the desktop experience too.

When you’re looking at an individual photo, you are now able to zoom into the image to see it up close. On mobile/multi-touch devices you can use the familiar two-finger pinch-to-zoom gesture. Naturally, you can pan to other areas too by dragging the image with your finger. This is a very familiar interaction and definitely feels more like what you would expect from a native app.

On desktop devices you can also use the mouse/touchpad scroll wheel/gesture to zoom in and out. We think this feels quite natural where touch is not available.

We used a library called react-zoom-pan-pinch which helpfully gives us all these features in a very performant way so we’re very thankful to that project’s developers.

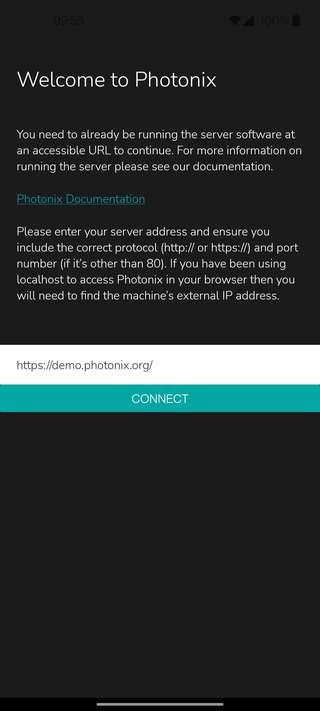

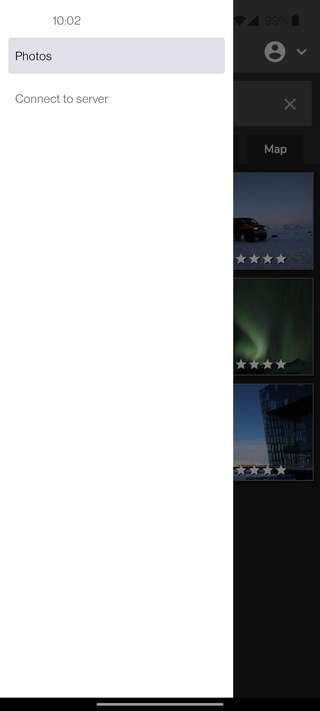

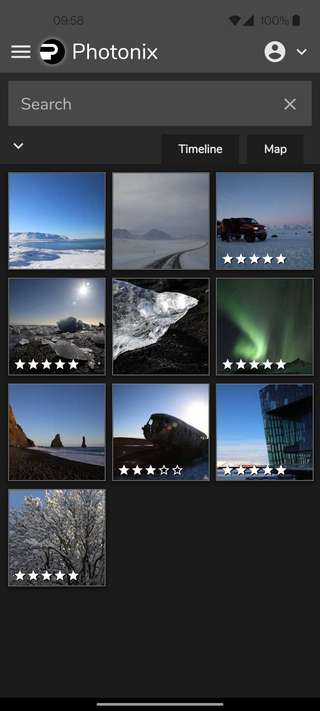

Mobile apps for Android and iOS

The mobile apps are well under way and the first version is now ready. There was a lot of testing and tweaking that needed to be done in combination with the other mobile layout issues mentioned above.

To avoid duplication of work, the main JavaScript web app is made to work as well as possible on mobile devices. The web app then becomes the base that is wrapped up inside the native apps. This has some downsides compared to writing completely native apps but the massive upsides are that there is a lot less code to maintain, we can move more quickly and all platforms (web/desktop/Android/iOS) will have feature parity. The Expo and React Native frameworks were chosen as they keep the code for the native features the same between Android and iOS and abstract away a lot of platform intricacies.

There are, and will be more, parts that only exist in the native app. When you start the mobile app for the first time you will be asked to provide an address for your server. As people can host their own server at any URL the app remembers and connects to it in future. There is a native menu that lets you go and change that server if you need to in future. Other items will be added to this menu soon.

Here are some screenshots of the app running under Android (iOS version looks very similar).

A native feature that you can expect soon after this first release is auto-uploading of photos taken on your phone. I think this is important as people have come to expect this with tools like Google Photos and iCloud. Being a native app instead of just a web app allows us to read the camera roll (if given permission) and upload new photos in the background. This will give people peace of mind that their photos are backed up and removes the need to manually copy files around.

The apps have been built and tested on several Android and iOS devices and I think they are ready to be used by people who already have the latest version of Photonix server running. I’m currently making them available in the app stores which is taking a little while. Google Play is currently reviewing the uploaded version and will make it available as soon as it’s complete. On the Apple side, I’ve enrolled in their developer platform and should be able to submit for review shortly.

Go directly to next or previous photo

Before adding this feature, if you were looking at the detailed view of a photo you would have to go back to the thumbnail list to select another photo to look at. With this now implemented you can go directly to the photo before or after by using the left/right keyboard arrow keys or hovering over the left or right edges of a photo to reveal clickable icons.

The next/previous photos are linked to the search and filtering you have applied on the previous screen rather than cycling through all photos in the library. This is good as it means you can select a tag to filter to a specific person, object, color etc. and then quickly flip through the results.
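As a rough illustration of the idea (not the actual Photonix code, which lives in the JavaScript web app), the previous and next photos can be looked up from the ordered list of IDs returned by the current search. The function name `neighbours` and the example IDs are hypothetical.

```python
from typing import Optional, Sequence, Tuple


def neighbours(
    result_ids: Sequence[str], current_id: str
) -> Tuple[Optional[str], Optional[str]]:
    """Return the (previous, next) photo IDs around current_id.

    result_ids is the ordered list produced by the active search and
    filters, so navigation never leaves the filtered set.
    """
    index = result_ids.index(current_id)  # raises ValueError if not in results
    previous_id = result_ids[index - 1] if index > 0 else None
    next_id = result_ids[index + 1] if index < len(result_ids) - 1 else None
    return previous_id, next_id


# Hypothetical IDs of the photos matching the current filters
filtered = ["photo-3", "photo-7", "photo-9"]
print(neighbours(filtered, "photo-7"))
```

At either end of the results one side is `None`, which is where the UI would hide the corresponding arrow.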

There is some extra work scheduled for the coming month to make this transition better on mobile devices. Users have come to expect being able to swipe left or right to go to the previous or next photo rather than tapping the edges of the screen. This will give the app a more native feel.

Loading transitions for detailed photos

There was some ugliness when moving from the search/thumbnail page to the detailed view of a photo. Previously a loading spinner was only shown while the metadata about a photo was loading, followed by a blank screen for a while. Now the transition is smooth and the spinner shows until the photo has completely loaded.

It’s nice to think we’re at a point where we can work on fine adjustments and think about the user experience more. This is a transition that is going to be seen a lot by users so it’s worth getting it right.

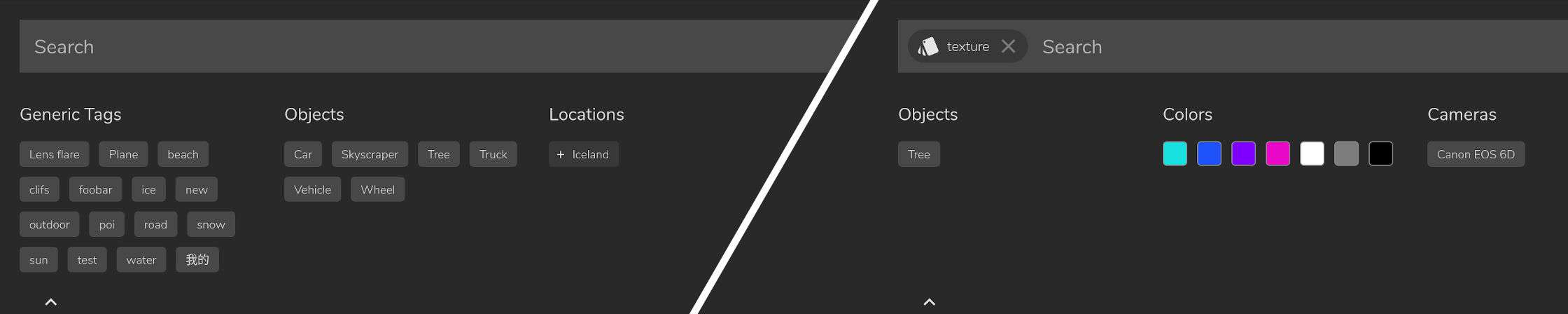

Faceted search

You’ve probably encountered faceted search before but might not know it by name. As you select filters, the remaining filters get reduced down. This has the effect that you can never apply another filter that would result in zero results. The remaining filters are always contained somewhere in the remaining photos. The benefit is that you can get to the photos you are looking for more quickly.

There are currently not many photos on the demo site but you can sample this feature if you select the “texture” style. You will notice that there are fewer available colors, fewer object tags and entire groups such as “Location” are removed as they would not assist in reducing the results further.
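To make the idea concrete, here is a minimal sketch of how the remaining filters could be computed. It is an illustration under assumptions, not the Photonix implementation: the data model (a set of `(group, name)` tags per photo), the function name `remaining_facets` and the rule of hiding tags shared by every match are all hypothetical.

```python
from collections import defaultdict
from typing import Dict, Set, Tuple

Tag = Tuple[str, str]  # (group, name), e.g. ("Color", "black")


def remaining_facets(
    photos: Dict[str, Set[Tag]], selected: Set[Tag]
) -> Dict[str, Set[str]]:
    """Return the filters that can still narrow the current results."""
    # Photos that carry every selected tag
    matching = [tags for tags in photos.values() if selected <= tags]

    # Count how many matching photos carry each tag
    counts: Dict[Tag, int] = defaultdict(int)
    for tags in matching:
        for tag in tags:
            counts[tag] += 1

    facets: Dict[str, Set[str]] = defaultdict(set)
    for (group, name), count in counts.items():
        # Skip tags that are already selected or present on every match:
        # choosing them would not reduce the results any further.
        if (group, name) in selected or count == len(matching):
            continue
        facets[group].add(name)
    return dict(facets)


# Hypothetical library of three photos
library = {
    "a": {("Style", "texture"), ("Color", "grey"), ("Location", "Iceland")},
    "b": {("Style", "texture"), ("Color", "black"), ("Location", "Iceland")},
    "c": {("Style", "portrait"), ("Color", "red"), ("Location", "France")},
}
print(remaining_facets(library, {("Style", "texture")}))
```

With the "texture" style selected, only the colors grey and black remain, and the whole "Location" group disappears because both matching photos share the same location.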

Date filtering by string

Staying on the same topic of search, this feature lets you query in the dimension of time. It’s not the most discoverable feature but if you type a month or year you’ll quickly see it working. You can search other filters/tags at the same time — the backend will recognise which parts are dates. There’s not much to say about how it works but I think it’s useful to give a few examples that work on the demo site:

- 2017: Show all the photos from this year

- mar 2017: Just photos taken in March 2017

- truck in march 2017: Photos tagged with a truck taken in March 2017

- 28th feb 2017: Photos from a particular day
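For readers curious how this kind of query could be split into a date range plus ordinary search terms, here is a small sketch. It is not the Photonix backend code: the function name `parse_query`, the filler-word list and the requirement that a year is present are all assumptions made for illustration.

```python
import calendar
import re
from datetime import date
from typing import List, Optional, Tuple

MONTH_NAMES = [
    "january", "february", "march", "april", "may", "june",
    "july", "august", "september", "october", "november", "december",
]
# Accept both full names ("march") and three-letter abbreviations ("mar")
MONTHS = {}
for number, name in enumerate(MONTH_NAMES, start=1):
    MONTHS[name] = number
    MONTHS[name[:3]] = number

YEAR_RE = re.compile(r"(19|20)\d{2}")
DAY_RE = re.compile(r"(\d{1,2})(st|nd|rd|th)")
FILLER = {"in", "on", "from"}  # assumed filler words, dropped from the terms

DateRange = Tuple[date, date]


def parse_query(query: str) -> Tuple[Optional[DateRange], List[str]]:
    """Split a query into an inclusive date range and the remaining terms."""
    tokens = [t for t in query.lower().split() if t not in FILLER]
    year = month = day = None
    terms: List[str] = []
    for token in tokens:
        day_match = DAY_RE.fullmatch(token)
        if YEAR_RE.fullmatch(token):
            year = int(token)
        elif token in MONTHS:
            month = MONTHS[token]
        elif day_match:
            day = int(day_match.group(1))
        else:
            terms.append(token)

    if year is None:
        # This sketch only recognises dates that include a year
        return None, tokens
    if month is None:
        # A day without a month is ignored
        return (date(year, 1, 1), date(year, 12, 31)), terms
    if day is None:
        last_day = calendar.monthrange(year, month)[1]
        return (date(year, month, 1), date(year, month, last_day)), terms
    # Raises ValueError for impossible dates such as "31st feb 2017"
    return (date(year, month, day), date(year, month, day)), terms


print(parse_query("truck in march 2017"))
```

The returned range can then be applied as a "taken between" filter while the leftover terms go through the normal tag search.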

CR3 file format support (Canon Raw v3)

Anyone who owns or will own a recent Canon camera will be thankful for this feature. For those that have never heard of raw photos before, these are files that come from the camera’s sensor more directly and have very little processing applied. This gives higher bit depth and more control when editing a photo using applications like Lightroom, Darktable or RawTherapee. The downside to raw files is that most camera manufacturers invent their own formats and leave it up to others to try and work out how to decode them.

The user @TaylorBurnham realised that the raw files from their Canon camera were showing as blank thumbnail images within Photonix and added an issue describing it. They then went on to investigate further and write a solution which I’ve gratefully now merged in.

Raw files often have a “thumbnail” embedded in them which is a full resolution JPEG. This is handy as we then have a file we can use for scaling, displaying in a browser and running analysis algorithms on. Extracting this image, when available, means we get the image that is processed by the camera with the configured white-balance, saturation and other settings. Where a raw file doesn’t embed a JPEG we have to perform a more advanced processing step. This extraction and processing is carried out by dcraw and it handles most file formats from most manufacturers — we just have to provide different arguments to it.

Canon’s CR3 is a newer format superseding their previous CR2 format. The quirk here is that CR3 files have a thumbnail embedded but it’s not in JPEG format. You can see from Taylor’s research that there has been quite a struggle among application developers to decode the embedded thumbnail. In the end a special case had to be added for this file type, and a different tool, exiftool (which we already included), was used to convert it to a JPEG.
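The special-casing could look something like the sketch below, which only builds the command line rather than running it. This is an assumption-heavy illustration and not the merged code: the function name `thumbnail_command` is hypothetical, and asking exiftool for the `JpgFromRaw` tag on CR3 files is a guess at which embedded image to request. The `dcraw -e -c` and `exiftool -b` options themselves are standard flags of those tools.

```python
from pathlib import Path
from typing import List


def thumbnail_command(raw_path: str) -> List[str]:
    """Build the command that writes a raw file's embedded preview to stdout."""
    suffix = Path(raw_path).suffix.lower()
    if suffix == ".cr3":
        # exiftool: -b outputs the tag's binary data; the tag name is an
        # assumption about where the usable preview lives in a CR3 file.
        return ["exiftool", "-b", "-JpgFromRaw", raw_path]
    # dcraw: -e extracts the camera-generated thumbnail, -c writes to stdout
    return ["dcraw", "-e", "-c", raw_path]


print(thumbnail_command("IMG_0001.CR3"))
print(thumbnail_command("IMG_0002.CR2"))
```

The resulting list could be passed to `subprocess.run(..., capture_output=True)` to collect the image bytes, provided both tools are installed.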

We have it on our radar to explore other raw processing options in future which might be better maintained, such as LibRaw.

Thumbnail service changes

The way thumbnails are generated was overhauled last month. Generating smaller thumbnails means images on the search and detail page can load quickly. Previously these were generated only at import time. Here we refer to any resized image as a thumbnail — this is every photo you see in Photonix, not just the small square ones.

The new thumbnailer service can generate thumbnails on demand when needed, accounting for the following scenarios:

- There are multiple versions of a photo and the user has selected a different one to be the primary.

- The cache folder got destroyed during a migration or to save space.

- We may decide in future to use different sized images in different parts of the UI or on different devices to save on download time and data.
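The on-demand behaviour boils down to "look in the cache first, generate only if missing". Here is a minimal sketch of that pattern, assuming a hypothetical `generate` callback and cache file naming scheme. The real service will differ in its details.

```python
import tempfile
from pathlib import Path
from typing import Callable, Tuple

Size = Tuple[int, int]


def get_thumbnail(
    photo_id: str,
    size: Size,
    cache_dir: Path,
    generate: Callable[[str, Size], bytes],
) -> Path:
    """Return the cached thumbnail path, generating the file if it is missing."""
    width, height = size
    cached = cache_dir / f"{photo_id}_{width}x{height}.jpg"
    if not cached.exists():
        # Covers a wiped cache folder as well as a size never requested before
        cache_dir.mkdir(parents=True, exist_ok=True)
        cached.write_bytes(generate(photo_id, size))
    return cached


# Stand-in generator that records how often it is called
calls = []


def fake_generate(photo_id: str, size: Size) -> bytes:
    calls.append((photo_id, size))
    return b"fake image bytes"


with tempfile.TemporaryDirectory() as tmp:
    cache = Path(tmp) / "cache"
    first = get_thumbnail("abc", (256, 256), cache, fake_generate)
    second = get_thumbnail("abc", (256, 256), cache, fake_generate)
    same_path = first == second

print(len(calls))
```

The second request is served from the cache, so the generator only runs once. Supporting a changed primary file would mean including the chosen file's identifier in the cache key as well.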

Aside from the introduction of this service, the actual algorithm doing the scaling has been improved. It’s quite complicated to explain but most of the main code libraries for resizing images have flaws. This is most notable as dark areas becoming darker when images are scaled down to a small size. The trick is to correct for gamma in the colorspace of the image (typically sRGB). You can read more about the effect and see examples at the ImageWorsener site.

The new algorithm takes longer to generate thumbnails but as the effect is only apparent in small thumbnails, that’s where we use it. It doesn’t take much more time to apply the extra transformations on these small images anyway. Our solution was adapted from this Stack Overflow answer. Thanks Nathan Reed — your solution is much neater (and faster) than my solution in the same discussion.
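The darkening effect is easy to reproduce with plain arithmetic. The sketch below is not our thumbnailer code, just an illustration using the standard sRGB transfer functions: it averages a block of pixel values (which is what downscaling does) once naively and once in linear light.

```python
from typing import Sequence


def srgb_to_linear(value: int) -> float:
    """Convert an 8-bit sRGB channel value to linear light (0.0 to 1.0)."""
    c = value / 255.0
    return c / 12.92 if c <= 0.04045 else ((c + 0.055) / 1.055) ** 2.4


def linear_to_srgb(linear: float) -> int:
    """Convert linear light (0.0 to 1.0) back to an 8-bit sRGB channel value."""
    if linear <= 0.0031308:
        c = linear * 12.92
    else:
        c = 1.055 * linear ** (1 / 2.4) - 0.055
    return round(c * 255)


def naive_average(pixels: Sequence[int]) -> int:
    """Average the encoded values directly, as many resizers do."""
    return round(sum(pixels) / len(pixels))


def gamma_correct_average(pixels: Sequence[int]) -> int:
    """Average in linear light, then re-encode to sRGB."""
    linear_mean = sum(srgb_to_linear(p) for p in pixels) / len(pixels)
    return linear_to_srgb(linear_mean)


# A 2x2 block of alternating black and white pixels scaled down to one pixel
block = [0, 255, 0, 255]
print(naive_average(block), gamma_correct_average(block))
```

The naive average comes out at 128 while the gamma-correct one is 188, so the naive result is noticeably darker than the same checkerboard would look when viewed from a distance.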

Filtered results sorted by significance

This feature improves the results of filtering and searching. When our analysis algorithms detect features in an image we tag it with a significance score between 0 and 1. This is calculated in different ways depending on the algorithm. Things that can affect the significance score include:

- Size of the feature relative to the whole image, e.g. object bounding box or number of pixels that match a certain color.

- How confident the analyser is of what it has detected, e.g. object detector might be 80% sure it found a cat.

- If a user has tagged an image then we give a significance of 1.0 as the user must know what the photo is of.

Now that significance score gets taken into account in search you are more likely to see the most relevant results first. A quick example you can try out on the demo site is to filter by the color black. You will see a black sand beach first followed by images with less and less black in them.
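In code terms the ordering is a sort on that score, with user-applied tags pinned to 1.0. The sketch below uses a hypothetical `PhotoTag` structure and made-up scores. It shows the idea rather than the actual query Photonix runs.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class PhotoTag:
    photo_id: str
    tag: str
    significance: float  # 0.0 to 1.0, as produced by an analyser
    user_tagged: bool = False


def effective_significance(photo_tag: PhotoTag) -> float:
    """Tags applied by a user always count as fully significant."""
    return 1.0 if photo_tag.user_tagged else photo_tag.significance


def search_by_tag(photo_tags: List[PhotoTag], tag: str) -> List[str]:
    """Return IDs of photos carrying the tag, most significant first."""
    matches = [pt for pt in photo_tags if pt.tag == tag]
    matches.sort(key=effective_significance, reverse=True)
    return [pt.photo_id for pt in matches]


# Made-up example data
tags = [
    PhotoTag("street", "black", 0.3),
    PhotoTag("beach", "black", 0.9),
    PhotoTag("portrait", "black", 0.1, user_tagged=True),
    PhotoTag("forest", "green", 0.8),
]
print(search_by_tag(tags, "black"))
```

Here the user-tagged portrait comes first, then the beach with lots of black in it, then the street scene with only a little.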

File path and metadata display

These two checklist items finish up a long-standing ticket about the photo detail view. If a user is looking for some specific information generated by the camera when the photo was taken, they can view everything in the Photonix UI. For convenience we also show the full path of the image. I doubt this will be used all the time but it saves having to switch to another tool and could help diagnose issues.

Extended GraphQL test coverage

Given that there had been a lot of extra API data made available to the UI, it made sense to do a full pass over the existing GraphQL tests and add new ones. This has brought our coverage back up to a decent level, from 80% to 88%.

You can see how we track code coverage on Codecov.

Ignore Synology thumbnails

The user Yurij Mikhalevich ran Photonix on their Synology NAS and found that there were undesirable files being imported. On Synology devices thumbnails and other metadata are stored in @eaDir directories throughout the filesystem. Yurij kindly submitted a pull request to exclude these folders and make the experience better for other Synology owners. Thank you Yurij!
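Excluding a directory while walking a filesystem is straightforward in Python. The sketch below illustrates the general technique and is not Yurij’s actual change: the function name `iter_photo_paths` and the example file names are hypothetical.

```python
import os
import tempfile
from typing import Iterator

IGNORED_DIRS = {"@eaDir"}  # Synology metadata/thumbnail folders


def iter_photo_paths(root: str) -> Iterator[str]:
    """Yield every file path under root, skipping ignored directories."""
    for dirpath, dirnames, filenames in os.walk(root):
        # Editing dirnames in place stops os.walk descending into them
        dirnames[:] = [d for d in dirnames if d not in IGNORED_DIRS]
        for filename in filenames:
            yield os.path.join(dirpath, filename)


# Build a tiny example tree: one real photo and one Synology thumbnail
with tempfile.TemporaryDirectory() as root:
    os.makedirs(os.path.join(root, "holiday", "@eaDir", "beach.jpg"))
    for relative in (
        os.path.join("holiday", "beach.jpg"),
        os.path.join("holiday", "@eaDir", "beach.jpg", "thumb.jpg"),
    ):
        with open(os.path.join(root, relative), "wb") as handle:
            handle.write(b"")
    found = sorted(os.path.relpath(p, root) for p in iter_photo_paths(root))

print(found)
```

Only the real photo is returned. Pruning `dirnames` also means the walk never spends time inside the ignored folders.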

Settings clean-up

As well as submitting the Synology fix above, Yurij Mikhalevich also fixed some duplication in our Django settings file. Thanks again Yurij.

For next month…

- Raspberry Pi / ARM support to be completed #67

- Photo uploading via browser #137

- Import fails when image has camera model but no camera make #196

- Handle moving/renaming files within photos directory #188

- Allow user to select preferred file where there are multiple PhotoFiles for a single Photo #193

I hope this round-up has been of some interest to you and congratulations if you made it this far. If you’d like to hear about future announcements please add your email address to the mailing list form below.

Thanks for reading,

Damian

Header photo by Okai Vehicles