We think you shouldn’t have to sacrifice privacy to keep your photo memories well organized and accessible from anywhere. We focus on design and explore the possibilities of artificial intelligence. We provide server software and mobile apps that understand your photos and present them in an intuitive way on any device. Photonix is and always will be an open source project.

Thanks for joining me for the latest rundown of new features. I’d summarise this one as being more about quality over quantity as there aren’t as many items to talk about compared to previous months. What we do have this time is one massive headline feature and a handful of simpler but still really useful ones.

You can experience the features for yourself by visiting the online demo. Some of the features will make more sense if you can run it yourself and load in your own photos. You can see our installation instructions for more details.

Face recognition and automatic grouping

GitHub issue #124

Here it is, the big one. The use case here is that you have photos of your family and friends and they get labelled so you can quickly find photos of a particular person. If you label your mum as being in a photo, then Photonix should also label other photos as your mum if the face is similar enough.

This machine learning model is the first in our toolkit to be trained locally to your unique dataset of photos — everyone’s libraries will have different people that they care about. However, only a small portion of it needs to be retrained which makes it very efficient and it can group faces together before you’ve even created any labels.

The feature was started several months ago with face detection, but recognition, grouping and labelling now make it complete.

I believe having face recognition means Photonix is now a serious option for many more potential users. Users have been asking for this for months, if not years. The original developers of the algorithms listed below have published several accuracy metrics which you can read up on, but I suggest that the only real way to tell whether it is good enough is to try it on your own photo collection. We’d be interested to hear how you find it.

You can read more about the full implementation and the algorithms used in our Image Analysis documentation.

Here is a summary of the main steps:

- Detect face positions and sizes in photos that are imported.

- Crop, transform and align face to correct for people positioning their heads differently.

- Compute embedding “fingerprint” for each face image.

- Build a Nearest Neighbours similarity search index to quickly compute distance between face embeddings. If two faces are close enough together, tag them as being the same person.

- Provide user interface so a human can label, accept or reject the face groups that were found.

Key technologies used:

- MTCNN — Neural network for detecting faces and key features within (Tensorflow implementation)

- FaceNet — Neural network developed by Google for producing face embeddings (Tensorflow implementation)

- Deepface — Python library that provides transformation functions and a framework for easily using different embeddings

- Annoy — Approximate Nearest Neighbours library produced by Spotify

- Pillow — Imaging library used for cropping and resizing

- Numpy — Fast numeric processing of image data
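To make the grouping step from the summary above more concrete, here is a minimal sketch in plain Python. It is not the Photonix implementation: a brute-force search stands in for the Annoy index, toy 2-D vectors stand in for real face embeddings (which have many more dimensions), and the function name `assign_groups` and the threshold value are assumptions chosen purely for illustration.

```python
import math


def euclidean(a, b):
    """Straight-line distance between two equal-length embedding vectors."""
    return math.sqrt(sum((x - y) ** 2 for x, y in zip(a, b)))


def assign_groups(embeddings, threshold):
    """Give each face a group id, reusing the id of its nearest earlier face
    when that face is closer than `threshold` (hypothetical helper)."""
    groups = []
    next_id = 0
    for i, embedding in enumerate(embeddings):
        best_index, best_distance = None, threshold
        # Brute-force nearest neighbour search; an approximate index such as
        # Annoy would replace this inner loop for large libraries.
        for j in range(i):
            distance = euclidean(embedding, embeddings[j])
            if distance < best_distance:
                best_index, best_distance = j, distance
        if best_index is None:
            # Nothing close enough: start a new, unlabelled group.
            groups.append(next_id)
            next_id += 1
        else:
            groups.append(groups[best_index])
    return groups


# Toy data: two faces near the origin and two near (5, 5).
faces = [(0.0, 0.0), (0.1, 0.0), (5.0, 5.0), (5.0, 5.1)]
print(assign_groups(faces, threshold=0.5))
```

With the toy data above, the first two faces end up in one group and the last two in another. In practice the threshold would need tuning against real photos, and a human still confirms or rejects each group in the user interface.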

Search auto-completion

GitHub issue #226

This is a big UI improvement that should help users find photos faster. Where previously they would have to scroll horizontally through the filters to find existing tags, now they can just start typing. The search matches manual tags that have been added as well as any of the automatic features detected or recognised by our machine learning algorithms. You can even combine this with the new face recognition feature highlighted above.

When you start typing in the search box, any partially matching tags will be displayed below. The icon for tag type is displayed on the left of the tag name to make it easier to identify. The full tag type is also displayed on the right side. To select one of the suggested tags you can either click/tap it or use your keyboard arrow keys to go up and down and then press enter.
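As a rough illustration of the partial matching described above, here is a small Python sketch. It assumes case-insensitive substring matching with prefix matches listed first; the function name `suggest_tags`, the tag type names and the ordering rule are all hypothetical and not taken from the Photonix code.

```python
def suggest_tags(query, tags, limit=5):
    """Return up to `limit` (name, tag_type) pairs whose name contains `query`.

    Hypothetical helper: matching is a case-insensitive substring test, and
    names that start with the query are listed before other matches.
    """
    needle = query.strip().lower()
    if not needle:
        return []
    matches = [tag for tag in tags if needle in tag[0].lower()]
    matches.sort(
        key=lambda tag: (not tag[0].lower().startswith(needle), tag[0].lower())
    )
    return matches[:limit]


# Example tags; the type labels here are assumptions for illustration.
tags = [
    ("Mum", "Person"),
    ("Mountain", "Object"),
    ("Summer", "Generic"),
    ("Christmas Day", "Event"),
]
print(suggest_tags("mu", tags))
```

Returning the tag type alongside the name is what would let a user interface show the type icon and label next to each suggestion.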

There was some suggestion on the Linux Unplugged Podcast that the user interface can look a bit cluttered and I assume this is due to the display of filters. Now that we have auto-completion, we don’t have to depend so much on showing the filters. I’ve started work on some refined designs for the UI, and this gives us more options for what to display and when, which should make things cleaner.

Commands for creating additional users and assigning to libraries

GitHub issue #194

The Photonix backend was designed to support multiple users and multiple libraries from an early stage. However, it has been very difficult for an end user to add these. This new command-line utility gets us a step closer to resolving this.

We recommend you go through the onboarding process in the browser to set up your admin user and initial library first. Running the command will ask you for a username and password to create an account. Once the additional user has been created you will be asked if you want to assign them to any of your libraries.

See the Users and Libraries documentation for instructions on using the commands.

If you were setting up Photonix for your family, you would go through the web-based setup to create your own account as the admin and create the shared library. You can then create additional libraries using the create_library command. Finally, use this new create_user command to create an account for each member of your family.

We will be adding a more user-friendly way of doing these things in the user interface but hopefully this improves the situation for those that are interested in having a multi-user or multi-library set-up.

Date-based event tagging

GitHub issue #157

The GitHub user @anoma made the suggestion earlier in the year that we could automatically recognise and tag certain events based on the date when the photo was taken. This is our first attempt at a new event recognition model serving as a foundation for supporting more event types later.

Some types of event are easier to make an assumption about than others. The easier types of events are celebrated globally and have well-defined time and date ranges. We have implemented only the easiest ones for now but will be improving the intelligence, adding more localised celebrations in future. The events that we now tag are: Christmas Day, New Year, Halloween and Valentine's Day. We recognise that these are skewed to a western perspective and are not celebrated the world over but believe there is a large overlap with most of our audience. Hopefully users who do not celebrate these events will not be too inconvenienced.
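The general idea of date-based tagging can be sketched in a few lines of Python. This is only an illustration: the exact time and date ranges Photonix uses are not stated here, so the whole-day matching below, the choice to count both 31 December and 1 January as New Year, and the function name `event_for` are all assumptions.

```python
from datetime import datetime

# Assumed (month, day) -> event name mapping, for illustration only.
EVENTS = {
    (2, 14): "Valentine's Day",
    (10, 31): "Halloween",
    (12, 25): "Christmas Day",
    (12, 31): "New Year",
    (1, 1): "New Year",
}


def event_for(taken_at):
    """Return the event name for the date a photo was taken, or None."""
    return EVENTS.get((taken_at.month, taken_at.day))


print(event_for(datetime(2021, 12, 25, 9, 30)))
print(event_for(datetime(2021, 6, 1, 12, 0)))
```

A lookup keyed on month and day keeps the easy cases trivial. Events that depend on the year or on where the photo was taken would need a richer rule than this.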

You can see a list of the other events that have been proposed. Many of these require knowledge of where the photo was taken. The most obvious way of identifying location would be from GPS coordinates embedded in metadata but many devices do not support this or have it turned on. Another option could be to try and identify a time zone in the metadata and assume location or country based on this.

There was also the suggestion of tagging events that are specific to only the people involved, for example birthdays. We could combine with other models such as object detection to identify a cake with candles or colour detection for St. Patrick’s Day (green).

If you have ideas for ways to improve this feature or suggestions for other events/celebrations then please add them to the issue.

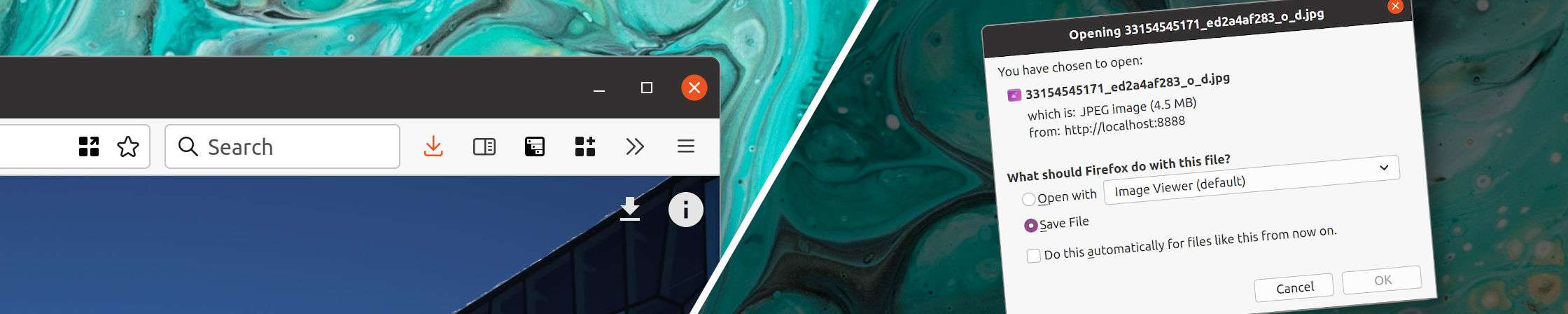

Ability to download photos from within browser

GitHub issue #259

This is an option that was suggested to me a few times by people who had given Photonix a try. They wanted to be able to download their chosen photo from within the browser/UI. This makes sense as people might be using a device which doesn’t have access to their photo library in any other way. Even if they are on a desktop machine that has the photos stored on the same device or mounted to the filesystem it may just be quicker to download where you are viewing it.

It should be very simple to use: just look for the download icon in the top-right corner when viewing the photo (next to the info icon).

The file that gets downloaded will be the original version that was uploaded — no scaling, compressing or conversion.

Map view state now restored after returning from detail view

GitHub issue #255

Map view has been slightly inferior to the timeline view up until now. When you clicked a thumbnail on the map and then returned, the map was reset to its initial state, meaning it was zoomed out and no longer positioned where you left it.

Now, every time the map is dragged or zoomed, we extract and save the state. When the map is loaded again, any saved state is used to set it up as it was.

This should mean map view can be used more seriously and will cause less frustration. There are some other changes coming to improve map view a bit more. Mainly, we intend to show small thumbnails of photos rather than the pins that you have to click to see a thumbnail. The result will mean that thumbnails are smaller but you’ll be able to see more at a glance and hopefully find what you’re looking for more quickly. You can follow progress on this in issue #265.

Animated hint to show that there are more filters available

GitHub issue #228

Some users on some devices were not realising they could scroll horizontally to get to more filters. Now we show a one-time animation to signify that the panel can be swiped to the left.

Sponsorship

In an effort to make the Photonix project more sustainable, we are now accepting monthly donations through GitHub Sponsors. It was a surprisingly lengthy process to get our company verified by Stripe and approved by GitHub.

If you appreciate the work we’ve put in so far and like the direction we are going in, we would really appreciate you taking a look at our sponsorship page. At time of writing, you could literally be our number one sponsor!

We want to help you explore your photo collection, access it quickly from any device and retain your privacy. Your support will help keep the project running and push us to invest even more time and effort. Thank you.

Coming soon…

- Manual rotation of photos #211

- Allow photos to be uploaded via web browser #137

- Reduce classifier job memory consumption #83

- Progress notifications #192

I hope you enjoyed this update and are excited by what’s happening with the project. If you want to keep up to date, make sure you’re signed up to the mailing list using the form below.

Thanks for reading,

Damian

Header image by Possessed Photography

Photo used to demonstrate our face recognition UI by Ayo Ogunseinde

Other face recognition images from the linked papers

Background images (acrylic and ink) by Pawel Czerwinski